What Is AI Interpretability?

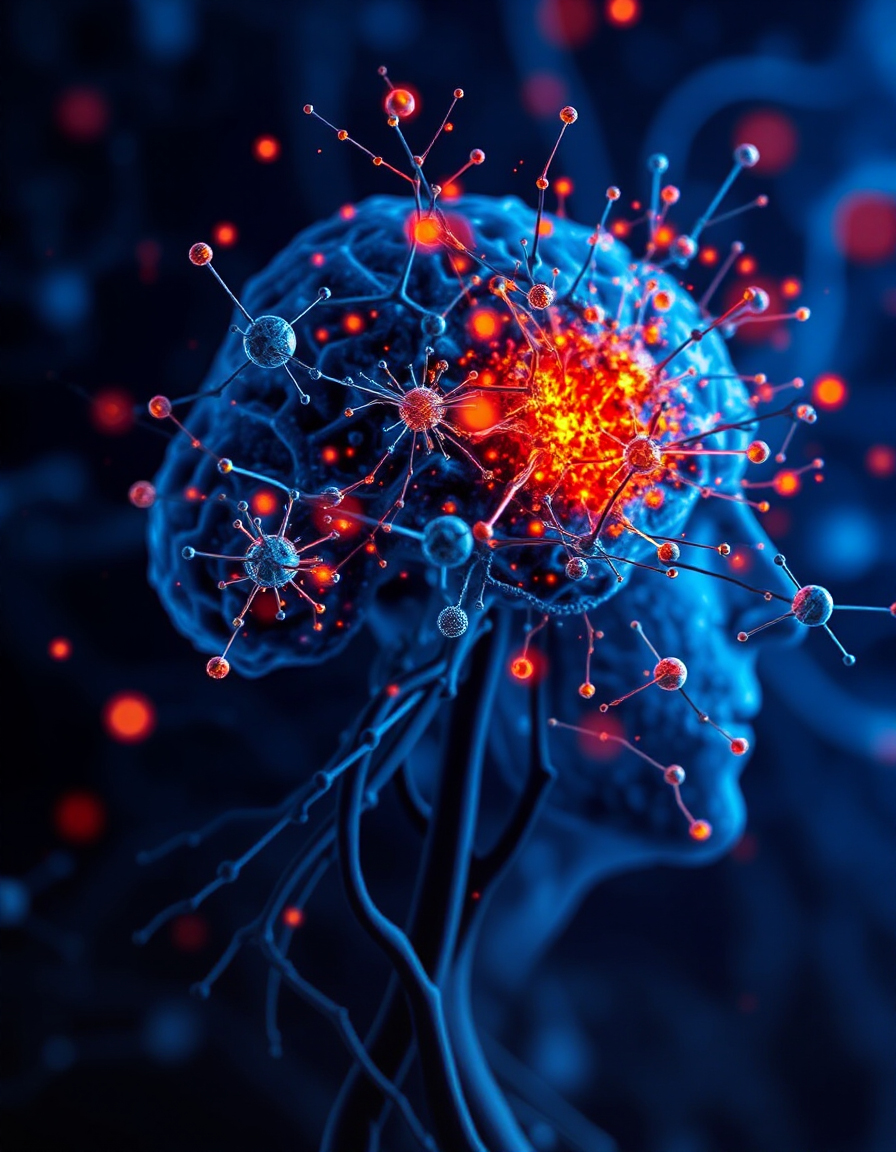

AI interpretability is the science of understanding how artificial intelligence models make decisions. Instead of treating AI like a black box, interpretability seeks to explain which patterns, neurons, and signals are responsible for specific outputs.

From Neural Networks to Human-Like Concepts

In 2025, researchers are bridging the gap between raw neural activity and human-understandable concepts. By mapping individual neurons and features inside large models like Claude, they can begin to trace how the model arrives at particular behaviors or responses.

Anthropic’s Approach to Decoding Claude

Anthropic used dictionary learning to identify millions of distinct features within Claude’s neural network. These features correspond to recognizable ideas like “anger,” “code syntax,” or “famous locations.” This creates a pathway to understanding how patterns of neuron activity carry meaning.
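To make the idea of a feature dictionary concrete, here is a minimal sketch of the sparse-coding step: writing one activation vector as a combination of just a few feature directions. It uses matching pursuit, a classic sparse-coding algorithm chosen here for brevity, and it is not Anthropic's actual pipeline. The 4-dimensional vectors, the `DICTIONARY` contents, and the `sparse_code` helper are all hypothetical; in real work the dictionary is learned from a model's activations rather than written by hand.

```python
import math

def dot(a, b):
    """Dot product of two equal-length vectors."""
    return sum(x * y for x, y in zip(a, b))

def normalize(v):
    """Scale a vector to unit length."""
    norm = math.sqrt(dot(v, v))
    return [x / norm for x in v]

# Hypothetical toy dictionary: each feature is a unit direction in a
# 4-dimensional activation space. Real dictionaries are learned, not hand-written.
DICTIONARY = {
    "anger": normalize([1.0, 0.0, 0.0, 0.0]),
    "code syntax": normalize([0.0, 1.0, 0.0, 0.0]),
    "famous locations": normalize([0.0, 0.0, 1.0, 1.0]),
}

def sparse_code(activation, dictionary, max_features=2, tol=1e-9):
    """Matching pursuit: greedily explain `activation` with a few features."""
    residual = list(activation)
    coefficients = {}
    for _ in range(max_features):
        # Pick the feature direction that best matches what is still unexplained.
        name, direction = max(
            dictionary.items(), key=lambda item: abs(dot(residual, item[1]))
        )
        strength = dot(residual, direction)
        if abs(strength) < tol:
            break
        coefficients[name] = coefficients.get(name, 0.0) + strength
        # Subtract the explained part and continue with the remainder.
        residual = [r - strength * d for r, d in zip(residual, direction)]
    return coefficients, residual

# An activation built from two features: 2 x "code syntax" + 3 x "famous locations".
activation = [
    2.0 * c + 3.0 * f
    for c, f in zip(DICTIONARY["code syntax"], DICTIONARY["famous locations"])
]
coefficients, residual = sparse_code(activation, DICTIONARY)
print(coefficients)
```

In this toy case the two contributing features are recovered and “anger” stays inactive, which is the kind of sparse, readable decomposition dictionary learning aims for.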

Turning Features Into Insights

Each feature acts like a mini-idea inside the model. When a certain feature activates (say, for “sarcasm” or “Golden Gate Bridge”), it influences Claude’s output. These activations help explain why Claude says what it says, and researchers can amplify, suppress, or isolate individual features to study their effects.
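Adjusting a feature can be pictured as moving an activation vector along that feature's direction. The sketch below is a toy illustration under stated assumptions: the 3-dimensional vectors, the `GOLDEN_GATE` direction, and the `steer` helper are hypothetical, and a real intervention would be applied to activations inside a running model rather than to a standalone list.

```python
import math

def dot(a, b):
    """Dot product of two equal-length vectors."""
    return sum(x * y for x, y in zip(a, b))

def normalize(v):
    """Scale a vector to unit length."""
    norm = math.sqrt(dot(v, v))
    return [x / norm for x in v]

# Hypothetical unit direction standing in for a "Golden Gate Bridge" feature.
GOLDEN_GATE = normalize([0.6, 0.8, 0.0])

def feature_strength(activation, direction):
    """How strongly the feature is present: projection onto its direction."""
    return dot(activation, direction)

def steer(activation, direction, target):
    """Return a copy of `activation` whose feature strength equals `target`.

    Only the component along `direction` changes; everything orthogonal
    to the feature is left as it was.
    """
    current = feature_strength(activation, direction)
    return [a + (target - current) * d for a, d in zip(activation, direction)]

activation = [1.0, 2.0, 3.0]
amplified = steer(activation, GOLDEN_GATE, target=10.0)   # turn the feature up
suppressed = steer(activation, GOLDEN_GATE, target=0.0)   # switch it off

print(feature_strength(activation, GOLDEN_GATE))
print(feature_strength(amplified, GOLDEN_GATE))
print(feature_strength(suppressed, GOLDEN_GATE))
```

Comparing a model's output before and after such a change is one way to test whether a feature actually does what its label suggests.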

The Power of Semantic Structure

One of the most fascinating discoveries is that Claude’s features are organized in structured, semantic clusters. Similar concepts sit close together, loosely resembling how human memory and language are organized. This suggests that large models form internal conceptual maps.
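One common way to check whether features cluster by meaning is to compare their direction vectors with cosine similarity and list each feature's nearest neighbors. The sketch below assumes made-up 3-dimensional vectors and adds two hypothetical features (“Alcatraz Island” and “irony”) purely for illustration; none of the numbers come from a real model.

```python
import math

# Hypothetical feature directions; the values are invented for illustration.
FEATURES = {
    "Golden Gate Bridge": [0.9, 0.1, 0.0],
    "Alcatraz Island": [0.8, 0.2, 0.1],
    "sarcasm": [0.0, 0.1, 0.9],
    "irony": [0.1, 0.0, 0.8],
}

def cosine(a, b):
    """Cosine similarity: close to 1.0 for similar directions, near 0 for unrelated ones."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

def nearest(name, features, k=1):
    """Return the k features whose directions are most similar to `name`."""
    others = [other for other in features if other != name]
    others.sort(key=lambda other: cosine(features[name], features[other]), reverse=True)
    return others[:k]

for name in FEATURES:
    print(name, "->", nearest(name, FEATURES))
```

With these toy vectors the two landmarks pair up and the two tone-of-voice features pair up, which is the sort of neighborhood structure the section describes.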

Why Interpretability Matters Now

As AI becomes more powerful, interpretability becomes essential. Understanding how models like Claude think helps ensure safety, accountability, and fairness. It allows humans to guide, improve, and monitor AI systems to align with ethical values.

The Future of Transparent AI

2025 marks a turning point where interpretability moves from theory to practice. Emerging tools and techniques let us not only observe a model's internal activity but also adjust it, making AI more like a partner we can understand and trust.

Conclusion

From neurons to meaning, the new science of AI interpretability is unlocking the black box of machine learning. By decoding internal processes, researchers are making AI more transparent and controllable, bringing us closer to safe, aligned, and meaningful artificial intelligence.

Related Reading

- iPhone 17 Pro: The Future of AI-Powered Smartphones Begins

- How Anthropic Used Dictionary Learning to Decode Claude’s Mind

- Inside Claude: Mapping Millions of AI Thoughts in 2025

FAQs

1. What is AI interpretability and why is it important?

AI interpretability explains how models generate responses, helping developers trust and refine the system’s outputs.

2. How are neurons and features related in AI models like Claude?

Features are patterns of activity spread across many neurons. Each feature corresponds to a specific idea or behavior the AI has learned from data, and a single neuron can take part in many features.

3. What role does dictionary learning play in interpretability?

Dictionary learning breaks a model's internal activations down into a small number of active features at a time. Researchers can then inspect and label those features, making it easier to understand what each part of the AI does.

4. Can researchers really modify AI thoughts by adjusting features?

Yes. Researchers can amplify or suppress individual features and observe how the output changes, which shows that these features have a causal effect on model behavior.

5. How does interpretability improve AI safety?

It helps detect harmful behavior, reduce bias, and provide explanations for decisions, making AI more reliable and ethical.